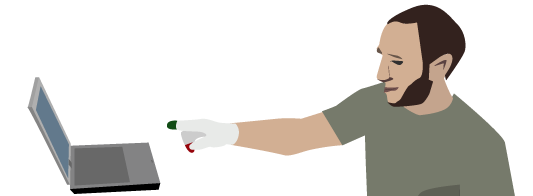

The user can point at the screen to click something, or use two fingers to scroll up and down a page. As many others building novel interfaces have found, it is very difficult to adapt a system designed around the fine-grained control of a mouse pointer. For this reason it is important to have an expressive set of gestures. Currently we only have gestures for moving the mouse pointer, scrolling, and clicking, but these alone are enough to make web browsing possible with this interface.
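To make the gesture set concrete, the following hypothetical dispatcher maps per-frame hand observations to pointer commands: one extended finger moves the pointer, and two fingers scroll by the vertical distance the hand travels between frames. The `HandObservation` type, the finger-count input, and the `pixels_per_scroll_step` parameter are assumptions for illustration, not AirTouch's actual interface, and clicking is left out of this sketch.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class HandObservation:
    """Hypothetical per-frame output of a hand tracker."""
    fingers: int                    # number of extended fingers detected
    position: Tuple[float, float]   # hand position in screen pixels (x, y)


def dispatch(prev: Optional[HandObservation], cur: HandObservation,
             pixels_per_scroll_step: float = 20.0) -> Tuple[str, object]:
    """Map two consecutive observations to a pointer command.

    One finger moves the pointer to the hand position. Two fingers (held in
    both frames) scroll by whole steps of vertical hand motion; a positive
    step count means the hand moved down the screen. Anything else is ignored.
    """
    if cur.fingers == 1:
        return ("move", cur.position)
    if cur.fingers == 2 and prev is not None and prev.fingers == 2:
        dy = cur.position[1] - prev.position[1]
        steps = int(dy / pixels_per_scroll_step)  # truncates toward zero
        if steps != 0:
            return ("scroll", steps)
    return ("none", None)
```

Because the step count is truncated independently in each frame, hand motion slower than one step per frame never scrolls; a more careful version would carry the fractional remainder over to the next frame.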

Our gesturing system is novel because users gesture within what we call a plane of interaction (the AirTouch Plane). A gesture has a different function depending on whether it is made inside or outside this plane. Gesturing inside the plane is the equivalent of touching a touch screen, a very popular interface metaphor.
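The in-plane/out-of-plane distinction can be sketched as a small state machine that turns plane crossings into touch-screen-style events. This is a hypothetical sketch, not the AirTouch implementation: it assumes an upstream stage already provides, each frame, the fingertip's signed distance from the AirTouch Plane (positive in front of the plane, negative once pushed through it), and `PlaneTouchTracker` and its `hysteresis` parameter are illustrative names.

```python
class PlaneTouchTracker:
    """Turn per-frame distances from an interaction plane into touch events.

    Assumed input: `distance` is the fingertip's signed distance from the
    plane (positive = in front of it, negative = pushed through it), in any
    consistent unit. A hysteresis band around the plane keeps noise from
    producing a burst of press/release events.
    """

    def __init__(self, hysteresis: float = 0.01):
        self.hysteresis = hysteresis
        self.touching = False

    def update(self, distance: float) -> str:
        if not self.touching and distance <= -self.hysteresis:
            self.touching = True
            return "press"      # entered the plane: like touching the screen
        if self.touching and distance >= self.hysteresis:
            self.touching = False
            return "release"    # pulled back out of the plane
        return "drag" if self.touching else "hover"
```

The hysteresis band is a design choice: without it, a fingertip held right at the plane would flicker between press and release from one frame to the next.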

The gesture system uses a homography to map gestures observed by the camera to a space behind the computer screen, so that the user points at the place they mean on the screen rather than at a corresponding place in the camera's view.
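As a minimal sketch of this mapping, assume a one-off calibration in which four points with known screen coordinates (for example, the user pointing at the four screen corners) are located in the camera image. A homography can then be estimated with the direct linear transform, fixing h33 = 1, and applied to every new camera point. The function names and the calibration procedure are hypothetical; a production system would more likely call a library routine such as OpenCV's `cv2.findHomography` with more than four correspondences.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Matrix3 = List[List[float]]


def _solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a square linear system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate correspondences (e.g. three collinear points)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def homography_from_4(src: Sequence[Point], dst: Sequence[Point]) -> Matrix3:
    """Direct linear transform for exactly four camera->screen correspondences."""
    a: List[List[float]] = []
    b: List[float] = []
    for (x, y), (u, v) in zip(src, dst):
        # u * (h31 x + h32 y + 1) = h11 x + h12 y + h13, and likewise for v.
        a.append([x, y, 1, 0, 0, 0, -x * u, -y * u])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -x * v, -y * v])
        b.append(v)
    h = _solve(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_homography(h: Matrix3, p: Point) -> Point:
    """Map a camera point to screen coordinates via homogeneous division."""
    x, y = p
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)
```

After calibration, each tracked hand position in camera coordinates is passed through `apply_homography` to obtain the on-screen pointer position; the perspective division by `w` is what lets a tilted or off-centre camera view map correctly onto the rectangular screen.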

For tracking we use a modified version of the Continuously Adaptive Mean-Shift (CAMShift) algorithm. We adjusted the algorithm to automatically re-acquire a target that is lost, whether through very quick movement or by leaving and re-entering the frame.
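The exact modifications are not described here, so the following is only a simplified, pure-Python sketch of a CAMShift-style tracker. Mean-shift iterations move a fixed-size window onto the centroid of a per-pixel target-probability map (for example, a colour-histogram back-projection, assumed to be computed elsewhere), and the window is then resized from the zeroth moment. The re-acquisition rule, which falls back to searching the whole frame whenever the mass inside the window drops below a hypothetical `min_mass` threshold, is an assumed stand-in for the authors' adjustment. OpenCV's `cv2.CamShift` provides an optimised version of the core algorithm.

```python
import math
from typing import List, Optional, Tuple

ProbMap = List[List[float]]         # per-pixel target probability in [0, 1]
Window = Tuple[int, int, int, int]  # (x, y, width, height), top-left origin


def _moments(prob: ProbMap, win: Window) -> Tuple[float, float, float]:
    """Zeroth and first moments of the probability inside `win`, clipped to the image."""
    x, y, w, h = win
    rows, cols = len(prob), len(prob[0])
    m00 = m10 = m01 = 0.0
    for yy in range(max(0, y), min(rows, y + h)):
        for xx in range(max(0, x), min(cols, x + w)):
            p = prob[yy][xx]
            m00 += p
            m10 += xx * p
            m01 += yy * p
    return m00, m10, m01


def _centred(cx: float, cy: float, w: int, h: int) -> Window:
    """A w-by-h window centred on (cx, cy); it may extend past the image border."""
    return (int(round(cx - w / 2)), int(round(cy - h / 2)), w, h)


def camshift_step(prob: ProbMap, win: Window, max_iter: int = 10,
                  min_mass: float = 1.0) -> Optional[Window]:
    """Track the target through one frame; return the new window, or None if absent."""
    rows, cols = len(prob), len(prob[0])
    if _moments(prob, win)[0] < min_mass:
        # Assumed re-acquisition rule: the target was lost (fast motion, or it
        # left the frame), so restart the search from the whole image.
        win = (0, 0, cols, rows)
        if _moments(prob, win)[0] < min_mass:
            return None  # nothing to re-acquire in this frame
    # Mean shift: move the fixed-size window onto the centroid until it settles.
    for _ in range(max_iter):
        m00, m10, m01 = _moments(prob, win)
        if m00 <= 0.0:
            return None
        new_win = _centred(m10 / m00, m01 / m00, win[2], win[3])
        if new_win == win:
            break
        win = new_win
    # Continuous adaptation: size a square window as 2*sqrt(M00), which matches
    # Bradski's 2*sqrt(M00/256) rule once probabilities are scaled to [0, 1].
    m00, m10, m01 = _moments(prob, win)
    if m00 <= 0.0:
        return None
    side = max(3, int(round(2 * math.sqrt(m00))))
    return _centred(m10 / m00, m01 / m00, side, side)
```

Calling `camshift_step` once per frame with the previous window lets the tracker follow the target. When the target leaves the frame the function returns `None`; passing the last known window on later frames triggers the whole-frame search, which locks on again once the target reappears. A whole-frame centroid is only reasonable when a single blob dominates the probability map, so cluttered scenes would need a smarter search.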

This project started as a group term project for Dr. Jason Corso’s “Vision for HCI” course and continued for about a year afterward. The group consisted of Jeff Delmerico and me. AirTouch is no longer under active development.

Read more about AirTouch:

- Schlegel, D.R. and Delmerico, J.A. Armchair Interface: Computer Vision for General HCI (Demo, Extended Abstract). Proceedings of Computer Vision and Pattern Recognition 2010, San Francisco, California, USA, June 2010, 2 pages.

- Schlegel, D.R., Chen, A.Y.C., Xiong, C., Delmerico, J.A., and Corso, J.J. AirTouch: Interacting With Computer Systems At A Distance. Proceedings of the IEEE Workshop on Applications of Computer Vision, Kona, Hawaii, USA, January 2011, 8 pages.